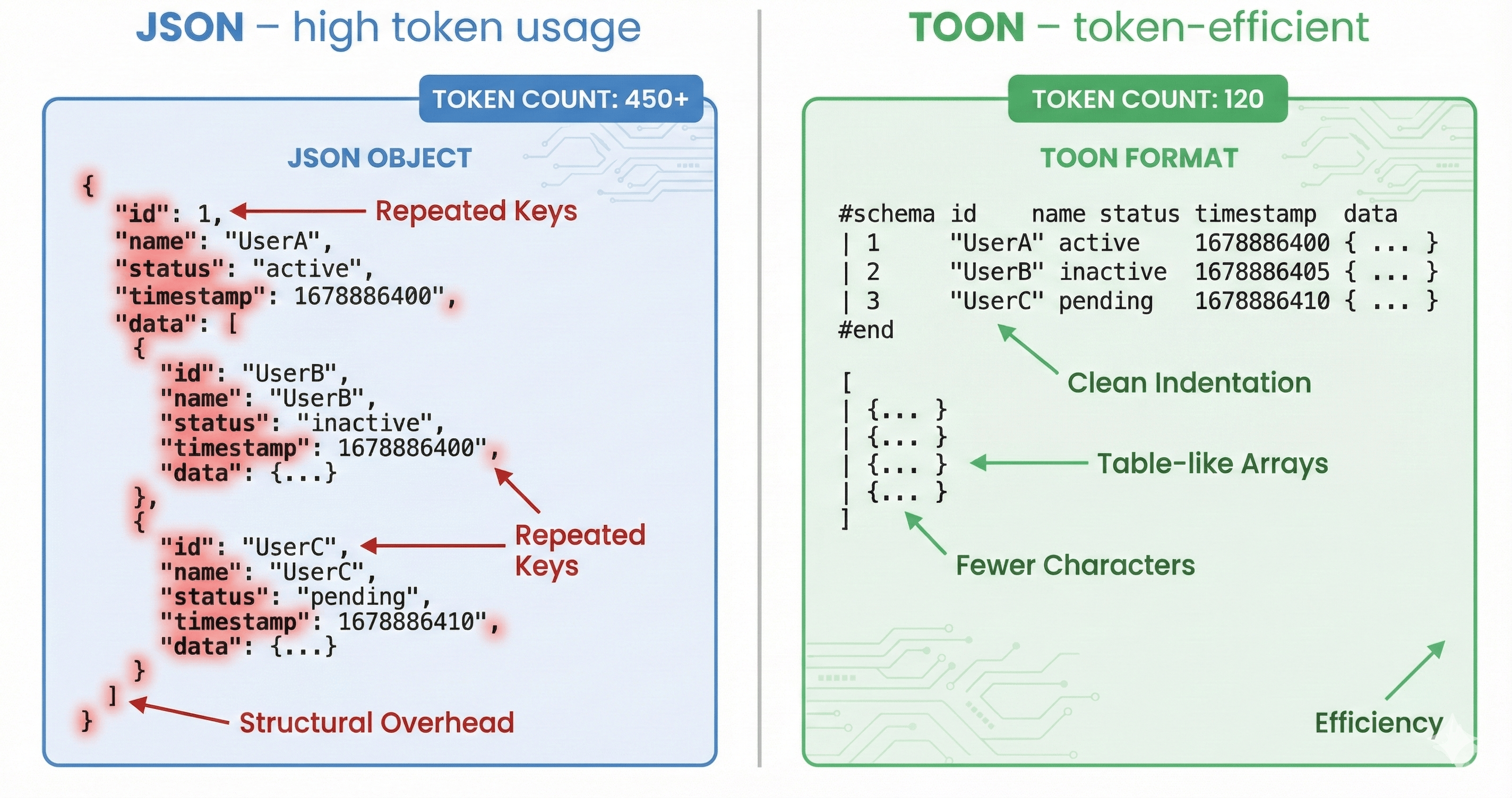

In the age of AI and large language models (LLMs), structured data is everywhere, and JSON has long been the standard for organizing it. But when feeding JSON directly into LLM prompts, every brace, quote, and repeated key adds extra tokens—slowing responses, increasing costs, and reducing usable context. That’s where TOON format comes in.

By using a JSON to TOON converter, you can transform standard JSON into TOON, a format that is compact, human-readable, and optimized for LLM prompts. This approach reduces token usage, can improve model comprehension, and streamlines AI workflows. Whether you’re handling user data, product catalogs, or event logs, converting JSON to TOON keeps your data structured while making AI processing faster and more efficient.

In this article, we’ll explore why JSON to TOON format is the future for LLM prompts, provide real-world examples, and show how the TOON format beats JSON in efficiency, readability, and AI performance.

TOON (Token-Oriented Object Notation) is a data format designed for one purpose: minimizing token usage when sending structured data to large language models (LLMs).

Unlike traditional JSON, which spends many tokens on braces, quotes, and punctuation, TOON removes unnecessary symbols such as:

Instead, TOON uses a clean, table-like structure where:

```
id | name | role | status
101 | Sarah | admin | active
102 | Omar | editor | inactive
103 | Lily | admin | active
```

```json
[
  { "id": 101, "name": "Sarah", "role": "admin", "status": "active" },
  { "id": 102, "name": "Omar", "role": "editor", "status": "inactive" },
  { "id": 103, "name": "Lily", "role": "admin", "status": "active" }
]
```

Even minified JSON is still much heavier than TOON.

JSON was built for machine-to-machine communication, not for tokenized language models. An LLM's tokenizer spends tokens on every brace, quote, and comma, so JSON’s structure becomes expensive noise.

LLMs prefer dense, signal-rich input, and JSON is full of structural “noise”.

The AI community realized that traditional formats like JSON and YAML are not optimized for LLM tokenization. Their syntax-heavy structure becomes a bottleneck when working with large datasets.

TOON was created to solve exactly these problems:

It's not a replacement for JSON everywhere—it's a prompt-level optimization layer.

| Feature | JSON | TOON |
| --- | --- | --- |
| Quotes | Required everywhere | None |
| Braces {} | Required | None |
| Commas | Required | None |
| Repeated keys | Always repeated | Declared once in header |
| Token cost | High | Low |
| Human readability | Medium | High (table-like) |
| Optimized for LLMs | No | Yes |
| Format style | Verbose syntax | Clean and minimal |

| Feature | TOON | JSON |
| --- | --- | --- |
| Token efficiency | 30–60% fewer tokens on uniform datasets | Verbose syntax inflates tokens |
| Readability | Human-friendly, table-like layout | Heavy structural punctuation |
| Ideal use case | Large uniform arrays (products, logs, RAG chunks) | Highly nested or irregular data |
| Data fidelity | 100% lossless when converted with tools | Native storage format |
| Ecosystem | Emerging | Universal |
| Performance in LLMs | Higher parsing accuracy | More confusion + structural noise |
| Limitations | Not ideal for deeply nested data | Handles nesting well |

Because:

For large prompts, this is a massive saving.

Savings: ~70% tokens

Python developers can easily convert JSON to TOON format using popular libraries. Here’s a simple example:

```python
import json

from jsontotoon import convert_to_toon

# Sample JSON

# Convert JSON to TOON
```

This snippet shows how Python can handle JSON-to-TOON conversion, preserving the data while reducing token usage for LLM prompts.
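For readers who want to see the idea without any third-party package, here is a minimal, library-free sketch. It targets only the pipe-separated table layout shown earlier in this article, not a full TOON specification, and the function name `to_toon_table` is hypothetical. It assumes every record is a flat object with the same keys in the same order.

```python
import json


def to_toon_table(records):
    """Render a list of flat dicts as a header line plus one row per record.

    Hypothetical helper: it assumes all records share the same keys in the
    same order and that no value contains the "|" separator. Both
    assumptions are checked and raise ValueError when violated.
    """
    if not records:
        return ""
    header = list(records[0].keys())
    lines = [" | ".join(header)]
    for record in records:
        if list(record.keys()) != header:
            raise ValueError("records must share the same keys in the same order")
        cells = [str(record[key]) for key in header]
        if any("|" in cell for cell in cells):
            raise ValueError("values must not contain the '|' separator")
        lines.append(" | ".join(cells))
    return "\n".join(lines)


# Sample JSON (the user list used earlier in the article)
users = json.loads("""
[
  {"id": 101, "name": "Sarah", "role": "admin", "status": "active"},
  {"id": 102, "name": "Omar", "role": "editor", "status": "inactive"},
  {"id": 103, "name": "Lily", "role": "admin", "status": "active"}
]
""")

# Convert JSON to the table layout
toon_text = to_toon_table(users)
print(toon_text)
```

Note that this sketch turns every value into text, so the difference between the number `101` and the string `"101"` is lost. A production converter would need rules for types, quoting, and nested values.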

Token counts vary by tokenizer; these are approximations for illustration.

And this scales massively when you have hundreds or thousands of rows.
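Because token counts depend on the tokenizer, a quick way to get a feel for the difference is to compare raw character counts. The sketch below does that for the three-user example. Character count is only a rough proxy for tokens, so treat the result as indicative; the variable names are illustrative.

```python
import json

users = [
    {"id": 101, "name": "Sarah", "role": "admin", "status": "active"},
    {"id": 102, "name": "Omar", "role": "editor", "status": "inactive"},
    {"id": 103, "name": "Lily", "role": "admin", "status": "active"},
]

# Minified JSON: no spaces after separators.
minified_json = json.dumps(users, separators=(",", ":"))

# The same data in the table layout shown earlier in the article.
toon_text = "\n".join([
    "id | name | role | status",
    "101 | Sarah | admin | active",
    "102 | Omar | editor | inactive",
    "103 | Lily | admin | active",
])

json_chars = len(minified_json)
toon_chars = len(toon_text)
saving = 1 - toon_chars / json_chars  # fraction of characters saved

print(f"minified JSON: {json_chars} characters")
print(f"TOON table:    {toon_chars} characters")
print(f"saving:        {saving:.0%}")
```

To measure real token counts, run both strings through the tokenizer of the model you actually use.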

Using TOON is not just about token reduction. It directly improves model comprehension and reasoning.

LLMs get confused by JSON for three main reasons:

When you provide JSON to an LLM, it has to do two jobs:

But JSON’s structure includes tons of extra tokens:

These extra tokens distract the model from the content that matters.

TOON removes the noise and gives the model the important part:

the values.

This makes LLM parsing:

In the benchmarks this article cites (86.6% accuracy with TOON vs 83.2% with JSON), LLMs extract and transform TOON data somewhat more accurately than JSON.

TOON is extremely powerful for RAG pipelines because you can fit more documents inside the same context window.

(40% wasted on structure)

(Only 5–8% structural overhead)

This means:

This is a direct performance boost.
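To make the overhead figures above concrete, here is a small worked calculation. The 8,000-token budget is a hypothetical number chosen for illustration; the 40% and 5–8% overhead values are the ones quoted in this section.

```python
def usable_tokens(context_budget, structural_overhead):
    """Tokens left for actual content once structural overhead is paid."""
    return round(context_budget * (1 - structural_overhead))


budget = 8000  # hypothetical context budget reserved for retrieved data

json_payload = usable_tokens(budget, 0.40)       # 40% spent on structure
toon_payload_low = usable_tokens(budget, 0.08)   # 8% structural overhead
toon_payload_high = usable_tokens(budget, 0.05)  # 5% structural overhead

print(f"JSON: {json_payload} tokens of content")
print(f"TOON: {toon_payload_low} to {toon_payload_high} tokens of content")
```

Under these assumptions, the same budget carries roughly one and a half times as much content in TOON as in JSON.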

Let’s be practical. TOON is not for everything.

Here’s the correct strategy:

If it looks like a table → TOON wins.
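One way to turn the "looks like a table" rule into code is a small check that runs before conversion. This is a sketch under one assumed definition of "table-like": a non-empty list of flat objects that all share the same keys. The function name `looks_like_table` is hypothetical.

```python
def looks_like_table(data):
    """Return True when data is a non-empty list of flat dicts with one key set."""
    if not isinstance(data, list) or not data:
        return False
    if not all(isinstance(item, dict) for item in data):
        return False
    first_keys = set(data[0])
    for item in data:
        if set(item) != first_keys:
            return False  # ragged rows: keys differ between records
        if any(isinstance(value, (dict, list)) for value in item.values()):
            return False  # nested values do not fit a flat table
    return True


uniform = [
    {"id": 101, "name": "Sarah"},
    {"id": 102, "name": "Omar"},
]
nested = [{"id": 101, "profile": {"name": "Sarah"}}]
ragged = [{"id": 101, "name": "Sarah"}, {"id": 102}]

print(looks_like_table(uniform))  # True
print(looks_like_table(nested))   # False
print(looks_like_table(ragged))   # False
```

Data that fails the check can simply stay in JSON.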

JSON format is still perfect for:

TOON is not a general-purpose replacement.

Converting product lists from JSON to TOON saves tokens while sending large inventories to AI models for recommendation, summarization, or data enrichment.

For customer support or CRM systems, converting user arrays into TOON format reduces token bloat, enabling faster LLM responses and improved analytics.

Massive transaction logs can be compressed into TOON tables. This saves costs while ensuring precise structured data is available for AI fraud detection or reporting.

Chatbots and conversation analytics benefit from JSON-to-TOON conversion, which represents dialogue logs in TOON format and reduces token usage while preserving structure.

TOON can be used for API communication, but with a few practical considerations. Since TOON is a human-readable, indentation-based data format designed to reduce token usage for LLMs, it isn’t a drop-in replacement for JSON in traditional APIs. However, it can play a valuable role depending on how your system is structured.

This is the most powerful modern AI architecture:

Your databases, APIs, and services stay in JSON.

Right before sending data to an LLM:

Tell the model:

The following data is in TOON format. The first line is the header.
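A prompt-assembly step along these lines might look like the following sketch. The helper name `build_prompt` and the sample question are hypothetical; only the instruction sentence comes from the text above.

```python
TOON_NOTICE = "The following data is in TOON format. The first line is the header."


def build_prompt(question, toon_text):
    """Put the format notice first, then the data, then the user's question."""
    return f"{TOON_NOTICE}\n\n{toon_text}\n\n{question}"


toon_text = "\n".join([
    "id | name | role | status",
    "101 | Sarah | admin | active",
    "102 | Omar | editor | inactive",
])

prompt = build_prompt("Which users are inactive?", toon_text)
print(prompt)
```

Keeping the notice in one constant makes it easy to reuse the same wording across every prompt that carries TOON data.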

4. LLM Output Layer

You can even ask the model to output back in TOON for consistency.
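If the model answers in the same table layout, the reply can be turned back into records before it re-enters a JSON-based system. The sketch below assumes the pipe-separated layout shown earlier in this article and a hypothetical helper name, `parse_toon_table`. All values come back as strings, so any type conversion is left to the caller.

```python
import json


def parse_toon_table(text):
    """Parse a header line plus pipe-separated rows into a list of dicts."""
    lines = [line.strip() for line in text.strip().splitlines() if line.strip()]
    if not lines:
        return []
    header = [cell.strip() for cell in lines[0].split("|")]
    records = []
    for line in lines[1:]:
        cells = [cell.strip() for cell in line.split("|")]
        if len(cells) != len(header):
            raise ValueError(
                f"row has {len(cells)} cells, expected {len(header)}: {line!r}"
            )
        records.append(dict(zip(header, cells)))
    return records


# Stand-in for a model reply written in the table layout.
model_reply = """
id | name | status
102 | Omar | inactive
"""

records = parse_toon_table(model_reply)
print(json.dumps(records))
```

Rejecting rows with the wrong number of cells catches the most common failure, a model that drops or adds a column partway through its answer.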

This hybrid approach gives you:

Best of both worlds.

You can convert automatically using these steps:

This is what the TOON output looks like after converting JSON with the JSON to TOON converter.

You’re now ready to use the TOON data in your LLM prompts or AI workflows.

Tokens are too expensive to waste.

JSON’s verbose structure introduces:

TOON is not here to replace JSON universally—but it is the right tool for the LLM era.

If you handle large structured prompts, you’re leaving money and performance on the table by sticking to JSON.

Take this quick test:

You should see:

JSON to TOON is a tool that converts JSON data into TOON (Token-Oriented Object Notation). It removes extra syntax like braces, brackets, and unneeded quotes while keeping the structure intact through indentation. The converter uses a deterministic algorithm for consistent results. All processing happens locally in your browser for maximum speed and security.

Benchmarks confirm that a JSON-to-TOON conversion can cut token usage by 30–60%, especially for large, uniform arrays of records.

3. Can LLMs understand TOON easily?

Yes. LLMs read TOON more easily than JSON because it has less “noise” and more meaningful signal.

Yes, our JSON to TOON converter is completely free with no limitations. You can convert unlimited JSON data without signup or subscription. The tool runs entirely in your browser, so there are no server costs or rate limits.

Is TOON safe for sensitive data?

Yes. It’s purely a formatting style—no security risks.

No. JSON remains essential for APIs, storage, and complex data. TOON is a prompt optimization format, not a universal replacement.

All major LLMs (GPT-4, Claude, Gemini, and more) fully support TOON output from our JSON-to-TOON converter. With its clean, YAML-like syntax, TOON is easy for models to process. Benchmarks show 86.6% accuracy with TOON vs 83.2% with JSON, which suggests the conversion not only saves tokens but also improves model performance. TOON works directly in prompts, with no special instructions needed.